“Why, sometimes I’ve believed as many as six impossible things before breakfast.”

— Carroll

I was reading today about the BitTorrent protocol. From one moment to the next, I ended up reading about DHT networks.

You have a .torrent file that’s a small metadata blob encoded in Bencode (JSON’s ugly stepbrother).

The following JSON

{

"image": {

"src": "Images/Sun.png",

"name": "sun1",

"hOffset": 250,

"vOffset": 250,

"alignment": "center"

}

}

converts to the following Bencode

d5:imaged9:alignment6:center7:hOffseti250e4:name4:sun13:src14:Images/Sun.png7:vOffseti250eee

It contains:

- an info dictionary with filenames, sizes, and — critically — the file split into fixed-size pieces (typically 256KB–2MB each), with a SHA-1/256 hash per piece

- an announce URL which is the tracker’s address

- (optionally) DHT nodes, web seeds, etc

A magnet link is just a URI containing the info hash. Now, every node in the DHT table has a node ID. To find peers for an info hash, you do iterative lookups: ask the nodes closest to the info hash until you converge on nodes that have actually seen peers for it. That “closest” in a DHT is a very clever mathematical trick that’s at the heart of what I’m about to write — the XOR metric. You use it on the IDs, and that creates a consistent notion of distance in that ID space.

If you want an intuition, it goes like this. You have a giant phone book, but nobody owns it — every person in the city has a few pages. When you want someone’s number, you don’t go to one central office. Instead, you ask around. You start by asking whoever lives closest to where that person’s name would alphabetically appear. They might not have the exact page, but they know someone who’s a little closer. You ask that person. They point you to someone even closer. You keep hopping until you land on someone who has the actual entry. Typically you converge to the thing you’re looking for in about 6–8 hops.

This post is about a connection between peer-to-peer routing and 19th-century number theory, explained from scratch.

Before anything else, forget everything you know about distance. Let’s build it from the ground up. So, what even is a distance?

A distance function \(d(x, y)\) is just a rule that assigns a number to any pair of points. The only requirements are three common-sense rules:

- Identity: \(d(x, x) = 0\); a point is zero distance from itself.

- Symmetry: \(d(x, y) = d(y, x)\); distance doesn’t depend on direction.

- Triangle inequality: \(d(x, z) \le d(x, y) + d(y, z)\); going through a detour is never shorter.

That’s it. Any rule satisfying these three is a valid distance – a metric, more precisely. You can have wild, exotic metrics that look nothing like a ruler.

Let’s mess with the 3rd rule. What about this: instead of “the detour is no shorter,” I demand “the detour is no shorter than either leg alone”:

\[d(x, z) \le \max(d(x, y),\, d(y, z)) \tag{1}\]This is called the ultrametric inequality. It’s strictly stronger — any ultrametric is a metric, but not vice versa. And it has a bizarre geometric consequence: every triangle is isosceles, with the two equal sides being the longest ones. No scalene triangles. Ever.

That might sound like a curiosity, but it isn’t. Any metric satisfying this rule has a completely alien geometry — and XOR has it.

Before we move on, why do you think it’s alien?

What I love about simple things is that the more you stare at them the weirder they get. There’s no difference here. The trick above violates the intuitions that every other metric space you’ve ever worked in quietly assumes. Let’s consider the Euclidean space. In it, nearness is a spectrum. If \(x\) and \(z\) are close, you can usually find a \(y\) that’s “between” them: \(d(x,y) + d(y,z) = d(x,z)\), a midpoint. Thus, closeness is continuous: if you move \(x\) a little (to \(x + \varepsilon\)), the distance \(d(x + \varepsilon, y)\) can’t jump by more than \(d(x, x + \varepsilon) = \varepsilon\) relative to \(d(x, y)\), the original distance you started with. That’s the triangle inequality rearranged: \(|d(x, y) - d(x + \varepsilon, y)| \leq \varepsilon\).

In an ultrametric space, closeness is a binary hierarchical predicate (i.e. a function that returns true or false). You’re either in the same subtree at depth \(k\), or you’re not. There’s no “kind of close” — there’s “same branch” or “different branch.” For instance, distance doesn’t accumulate. In a normal metric space, distance is additive along paths. You go from \(x\) to \(y\) to \(z\), and the total distance is roughly the sum of the legs. Distance builds up as you traverse the space — each step contributes, and long routes are long because many small distances piled on top of each other. In an ultrametric, that arithmetic doesn’t happen because an ultrametric space is a binary tree. Your distance from \(x\) to \(z\) is not the sum of anything but the height of the branching point in the tree. It doesn’t matter how many intermediate nodes you pass through. The moment you cross a branch boundary at height \(h\), your distance becomes \(h\).

Let’s introduce XOR. XOR is a bitwise operation on binary numbers. Here’s the whole rule:

| bit \(a\) | bit \(b\) | \(a \oplus b\) |

|---|---|---|

| 0 | 0 | 0 |

| 0 | 1 | 1 |

| 1 | 0 | 1 |

| 1 | 1 | 0 |

Think of it as two light switches: if they’re in the same position, the output is off. If they differ, the output is on. Same → 0. Different → 1.

For multi-bit numbers, apply this rule to each bit position independently. Let’s work out \(5 \oplus 3\) by hand:

5 = 0 1 0 1

3 = 0 0 1 1

-------

5 ⊕ 3 = 0 1 1 0 = 6

That’s XOR. It measures disagreement between bits.

Now, can we use XOR as a distance? Let’s check.

Define:

\[d_\oplus(x, y) = 2^{\text{position of the highest bit where } x \text{ and } y \text{ differ}} \tag{2}\]Or equivalently: compute \(x \oplus y\), then take \(2^{\lfloor \log_2(x \oplus y) \rfloor}\) — the value of the most significant set bit of the XOR.

For example, verify:

| pair | \(x \oplus y\) | binary | highest bit | \(d_\oplus\) |

|---|---|---|---|---|

| \(d_\oplus(5,\ 6)\) | \(3\) | \(0011\) | bit 1 | \(2^1 = 2\) |

| \(d_\oplus(6,\ 14)\) | \(8\) | \(1000\) | bit 3 | \(2^3 = 8\) |

| \(d_\oplus(5,\ 5)\) | \(0\) | \(0000\) | — | \(0\) |

Note: the astute reader might have noticed that the raw integer value \(x \oplus y\) is not an ultrametric because it fails for triples like \((5, 6, 3)\) where \(5 \oplus 3 = 6 > \max(5 \oplus 6, 6 \oplus 3) = \max(3, 5)\). The fix is — as we’ve seen above — to use the highest-bit value, which rounds each XOR down (notice the floor op) to the largest power of 2 that doesn’t exceed it. Make sure this is clear.

Does \((2)\) satisfy the three metric rules? Definitely. But it satisfies something stronger: the ultrametric inequality we defined in \((1)\). Why? Because at the bit level, the highest bit where \(x\) and \(z\) disagree must already be a bit where either \(x\) and \(y\) disagree, or \(y\) and \(z\) disagree. If both \(y\) agreed with \(x\) and \(z\) on that bit, then \(x\) and \(z\) would have to agree on it too — that’s a contradiction.

Now, are some weird consequences of these facts: two balls are always either disjoint or one contains the other. In Euclidean space, two circles can easily form a Venn diagram where they share some area but not all of it. In an ultrametric space, this is mathematically impossible. And every point inside a ball is equally a center of that ball. Moreover, every interior point sees the exact same set of neighbours. The geometry has no “edges” — only “inside” and “outside.” Here’s your ball in an ultrametric space (wait, you thought it’s gonna be a round physical sphere?):

Let’s now think a bit about closeness — what does XOR-closeness actually mean?

Two numbers are close when their XOR is small — i.e., when the most significant differing bit is low. That means their leading (high-order) bits agree.

Take 4-bit integers and look at who is close to 0000:

| number | \(d_\oplus\) from 0000 |

cluster |

|---|---|---|

0000 |

\(0\) | ← center |

0001 |

\(2^0 = 1\) | \(B(0000, 1) = \{0000, 0001\}\) |

0010 |

\(2^1 = 2\) | |

0011 |

\(2^1 = 2\) | \(B(0000, 2) = \{0000, \ldots, 0011\}\) |

0100 |

\(2^2 = 4\) | |

0101 |

\(2^2 = 4\) | |

0110 |

\(2^2 = 4\) | |

0111 |

\(2^2 = 4\) | \(B(0000, 4) = \{0000, \ldots, 0111\}\) |

1000 |

\(2^3 = 8\) | |

1001 |

\(2^3 = 8\) | |

1010 |

\(2^3 = 8\) | |

1011 |

\(2^3 = 8\) | |

1100 |

\(2^3 = 8\) | |

1101 |

\(2^3 = 8\) | |

1110 |

\(2^3 = 8\) | |

1111 |

\(2^3 = 8\) | \(B(0000, 8) = \{0000, \ldots, 1111\}\) |

The cluster column closes at the last member of each ball — so you can read the table as a sequence of nested containments: each ball swallows the previous one whole, and the groups double in size at each step (1, 2, 4, 8 members).

Now forget XOR entirely. Here’s a completely different question. What if we defined “closeness” by shared trailing digits instead?

Let’s say two integers are “close” if their difference is divisible by a high power of 2. That is:

| pair | difference | highest power of 2 dividing it | close? |

|---|---|---|---|

| \(4,\ 12\) | \(8 = 2^3\) | \(2^3\) | yes |

| \(3,\ 7\) | \(4 = 2^2\) | \(2^2\) | yes |

| \(1,\ 2\) | \(1\) | \(2^0\) | no |

In this bizarre new metric, larger powers mean smaller distances. The key quantity is: how many times does 2 divide a number? Let’s give it a name — the 2-adic valuation \(v_2(n)\):

\[v_2(n) = \text{trailing zeros in the binary representation of } n \tag{3}\]Some examples:

| \(n\) | binary | \(v_2(n)\) |

|---|---|---|

| \(8\) | \(1000_2\) | \(3\) |

| \(7\) | \(0111_2\) | \(0\) |

| \(6\) | \(0110_2\) | \(1\) |

| \(5\) | \(0101_2\) | \(0\) |

| \(0\) | — | \(\infty\) |

Now, let’s define the 2-adic metric as:

\[d_2(x, y) = 2^{-v_2(x - y)} \tag{4}\]Small \(d_2\) means \(v_2(x-y)\) is large, which means \(x - y\) is divisible by a high power of 2, which means \(x\) and \(y\) agree on many trailing bits.

Let’s check who is close to \(0\) under this metric (all 4-bit integers, sorted by distance):

| number | \(d_2\) from 0000 |

cluster |

|---|---|---|

0000 |

\(0\) | ← center |

1000 |

\(2^{-3} = 0.125\) | \(B(0000, 0.125) = \{0000, 1000\}\) |

0100 |

\(2^{-2} = 0.25\) | |

1100 |

\(2^{-2} = 0.25\) | \(B(0000, 0.25) = \{0000, \ldots, 1100\}\) |

0010 |

\(2^{-1} = 0.5\) | |

0110 |

\(2^{-1} = 0.5\) | |

1010 |

\(2^{-1} = 0.5\) | |

1110 |

\(2^{-1} = 0.5\) | \(B(0000, 0.5) = \{0000, \ldots, 1110\}\) |

0001 |

\(2^{0} = 1\) | |

0011 |

\(2^{0} = 1\) | |

0101 |

\(2^{0} = 1\) | |

0111 |

\(2^{0} = 1\) | |

1001 |

\(2^{0} = 1\) | |

1011 |

\(2^{0} = 1\) | |

1101 |

\(2^{0} = 1\) | |

1111 |

\(2^{0} = 1\) | \(B(0000, 1) = \{0000, \ldots, 1111\}\) |

The cluster column closes at the last member of each ball — same nested-containment structure as XOR, balls doubling in size at each step (2, 4, 8, 16 members).

But notice: 1000 is the closest to 0000 because they share three trailing zeros, yet they differ in their leading bit and are maximally far under the XOR metric.

The 2-adic metric also satisfies the ultrametric inequality, with the same alien geometry — disjoint-or-nested balls, every interior point is a center, etc. Verify that this is true.

Before we move on, a brief historical note. The p-adic numbers were invented by Kurt Hensel in 1897. He introduced them in a paper that year and expanded the theory in his 1908 book Theorie der algebraischen Zahlen. The motivation was analogy with power series in complex analysis: just as you can expand a function as \(\sum a_n x^n\), Hensel wanted to expand integers as \(\sum a_n p^n\).

Here are both metrics side by side for all 4-bit integers 0–15. Let’s put them in order and watch the two clusterings diverge:

| number | \(d_\oplus\) from 0000 |

\(d_2\) from 0000 |

|---|---|---|

0000 |

\(0\) | \(0\) |

0001 |

\(2^0 = 1\) | \(1.000 = 2^{0}\) |

0010 |

\(2^1 = 2\) | \(0.500 = 2^{-1}\) |

0011 |

\(2^1 = 2\) | \(1.000 = 2^{0}\) |

0100 |

\(2^2 = 4\) | \(0.250 = 2^{-2}\) |

0101 |

\(2^2 = 4\) | \(1.000 = 2^{0}\) |

0110 |

\(2^2 = 4\) | \(0.500 = 2^{-1}\) |

0111 |

\(2^2 = 4\) | \(1.000 = 2^{0}\) |

1000 |

\(2^3 = 8\) | \(0.125 = 2^{-3}\) |

1001 |

\(2^3 = 8\) | \(1.000 = 2^{0}\) |

1010 |

\(2^3 = 8\) | \(0.500 = 2^{-1}\) |

1011 |

\(2^3 = 8\) | \(1.000 = 2^{0}\) |

1100 |

\(2^3 = 8\) | \(0.250 = 2^{-2}\) |

1101 |

\(2^3 = 8\) | \(1.000 = 2^{0}\) |

1110 |

\(2^3 = 8\) | \(0.500 = 2^{-1}\) |

1111 |

\(2^3 = 8\) | \(1.000 = 2^{0}\) |

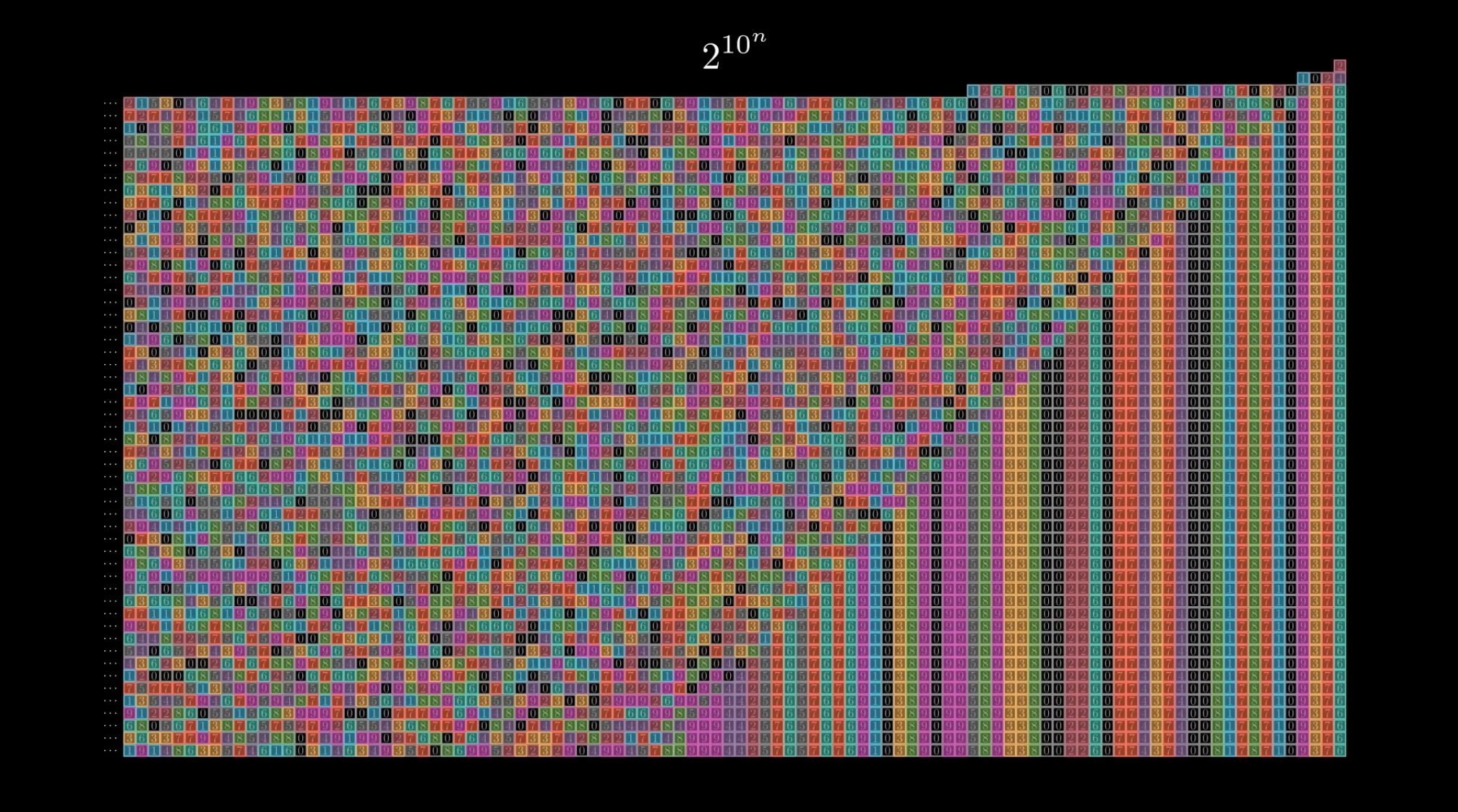

That table encodes a very pleasing visual representation, which I’ll render below. Before we look at the picture, I need one word: coset.

A coset is just a shifted copy of a repeating pattern. Take “all multiples of 4” within 0–15: that’s {0, 4, 8, 12}. Now shift it by 1: {1, 5, 9, 13}. Shift by 2: {2, 6, 10, 14}. Shift by 3: {3, 7, 11, 15}. We just produced four cosets.

Why do we care here? Because the balls of an ultrametric are cosets. Every number inside a 2-adic ball of radius \(2^{-k}\) is within \(2^{-k}\) of every other number in that ball — which means all their pairwise differences are divisible by \(2^k\) — which means they all belong to the same residue class modulo \(2^k\). That’s a coset of the subgroup of multiples of \(2^k\). The same is true for XOR: every ball is a coset of the subgroup of numbers sharing the same leading \(k\) bits.

So the colors in the picture below are cosets. Each color = one coset of some subgroup. The two panels show the two completely different coset structures that the two metrics induce on the same 16 numbers.

Let’s start by looking at the XOR panel first. It’s now obvious that the table’s rows form solid blocks: 0–3 share one color, 4–7 another, 8–11 another, and lastly, 12–15 another. They represent contiguous blocks. All numbers that share their leading bits land together, and the blocks tile the number line without gaps or interleaving.

Now look at the 2-adic panel. The colors alternate: 0 and 1 are different shades, 2 and 3 are different, and the pattern keeps splitting as we move to the right. We immediately notice that even and odd numbers belong to different groups. But what’s peculiar is that then within the evens, multiples of 4 and non-multiples split again. Then multiples of 8 split off. The groups aren’t contiguous ranges — they’re scattered across the number line in a fractal, self-similar pattern. Who ordered that?

In the picture you might notice the legend saying things like \(n \equiv 2 \pmod{4}\).

That’s because two numbers share \(k\) trailing bits in binary if and only if their difference is divisible by \(2^k\) — which is just \(x \equiv y \pmod{2^k}\).

“Sharing trailing bits” and “being congruent modulo a power of 2” are cast in the same mould.

So, looking at \(n \equiv 2 \pmod{2^2}\) picks out numbers whose last two bits are 10: that’s 2, 6, 10, 14.

Because we are measuring their distance from 0, we look at how many trailing bits they share with 0000 — which happens to be exactly their number of trailing zeros.

They each have exactly one trailing zero, so their 2-adic distance from 0 is \(2^{-1} = 0.5\).

Here’s one last cool part.

The same 16 numbers encode two completely alien notions of “neighborhood.”

1000 (= 8) is the most extreme example: it is tied for farthest from 0000 under XOR (left-to-right, MSB first), but is the uniquely closest number under 2-adic (right-to-left, LSB first) in this 4-bit universe.

One can formally prove the connection, but I’m gonna leave that as an exercise to the reader.

Anyway, isn’t this beautiful? I hope from now on you’ll always be thinking about trailing bits whenever you deal with a modulo!